The Martyrdom of Our Brother and Colleague Osman Makkawi

The Martyrdom of Our Brother and Colleague Osman Makkawi: A Grievous Loss for Journalism and Society

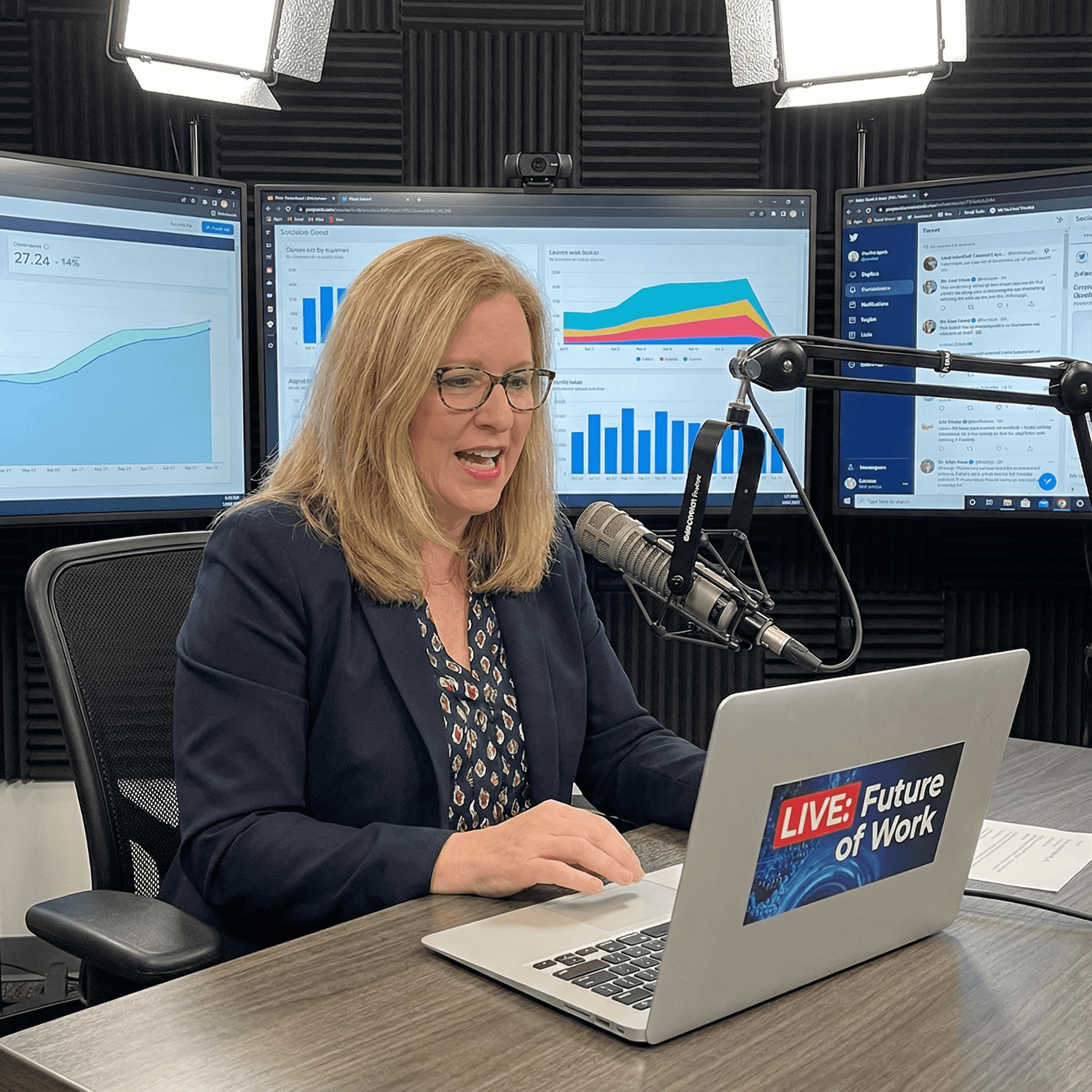

Journalist Osman Makkawi, regarded as one of the most prominent names in journalism, was killed while carrying out his professional duty covering ongoing events in the region. The tragic incident, which took place yesterday, set off a wave of grief and anger among his colleagues and across the wider journalistic community. Osman devoted his life to reporting the truth and confronting injustice, and his martyrdom is a grievous loss not only for his family and colleagues but for everyone who strives for justice.

Osman Makkawi, 36, worked in journalism for more than 15 years, during which he covered many of the major events that shaped his country's history. He was known for his courage and professionalism, and he received several awards in recognition of his tireless and dedicated work. Among his most notable assignments was his coverage of the popular demonstrations that swept the country, where he was always on the front lines to convey the real picture.

Speaking about Osman, his fellow journalist Ahmed Taha said: "Osman was a model of the courageous journalist who does not shy away from danger in order to bring the truth to light. He gave a great deal to his profession and to society, and his martyrdom reminds us all of the sacrifices journalists make in the effort to uncover the facts."

The incident that claimed Osman's life occurred while he was covering a protest demanding political and economic reforms. During the demonstration, journalists came under threat as security forces fired tear gas to disperse the protesters. Osman is believed to have been hit by a gunshot while trying to document events on the ground. His colleagues rushed him to the hospital, but he died of his wounds.

Osman Makkawi's martyrdom is seen as a turning point in the debate over press freedom and journalists' rights in the country. In recent years, the country has witnessed many incidents targeting journalists, raising questions about the safety and working conditions these reporters face. Several international organizations, including Reporters Without Borders, have issued statements condemning violence against journalists and calling for their rights to be protected.

Despite the grief that hangs over the journalistic community, many of its members are pledging to continue their work of reporting the truth, hoping that Osman Makkawi's sacrifice will spur a change in current conditions. The head of the journalists' syndicate, Fatima al-Zahraa, said: "We will not allow Osman's death to be in vain. We will keep working for press freedom, and we will keep fighting for justice."

In closing, Osman Makkawi remains a symbol of courage and perseverance in journalism. His martyrdom should move everyone to strengthen efforts to protect journalists and to guarantee a safe environment in which they can carry out their noble mission without fear. Osman's blood will not have been shed in vain; it will be a source of inspiration for coming generations of journalists who seek to uncover the truth and build a better society.